Multi-Turn Tool Use with RL

Think → Code → Check → Answer

Introduction

Reinforcement Learning (RL) has firmly established itself as a key ingredient for moving Large Language Models beyond simple next-token prediction. While pre-training and Supervised Fine-Tuning (SFT) teach a model to imitate human language patterns, RL provides the tools to optimize its behavior for a specific goal e.g. helpfulness, safety, or complex problem-solving. It’s the mechanism that lets us define what a “good” outcome is and reward the model for achieving it.

In my previous posts, I’ve explored algorithms like REINFORCE and GRPO for this kind of optimization, but mostly in the context of single-turn tasks. A personal research interest is to explore how RL could optimize for long-horizon user satisfaction, which means how satisfied a user feels after a whole interaction, not just a single prompt. This immediately means moving beyond single-turn responses. Most interesting problems aren’t solved in one shot; they require a multi-turn conversation. A simple example looks like this:

User: “I want to cook something for dinner.”

Agent: “Happy to help! What kind of-cuisines are you in the mood for?”

User: “Hmm, maybe something Italian or Mexican.”

Agent: “Great choices. Do you have any dietary restrictions, like vegetarian or gluten-free?”

User: “Nope, anything works.”

Agent: “Understood. Given that, here are two highly-rated recipes...”This is a simple dialogue. However, optimizing for long-horizon behavior often involves multi-turn conversations where the model plans, asks for missing context, tries an approach, checks the result, and revises before committing to a final answer. Each turn does work: it elicits constraints, commits to a plan, and makes the next step easier to evaluate. So, the conversation alone often isn’t enough. Many tasks need external tools such as search, a browser, a calculator, code execution, a table parser, or a notebook etc. to bridge from intentions (“plan a weekend”) to verifiable outcomes (“the museum is open; the route fits in 6 hours”).

A tool-using agent doesn’t just know things; it can do things. The most natural example is Deep Research: a multi-turn process that uses browsing and retrieval to gather evidence, compare sources, resolve disagreements, and synthesize a response.

Here’s how multi-turn with tool use feels for a deep research setting:

User: What’s a practical recipe to reduce coffee acidity without losing flavor?

Assistant (plan): I’ll (1) survey credible sources for brew chemistry, (2) extract technique-level claims (grind, temp, ratio), (3) check for consensus, and (4) propose a ranked, testable checklist.

Assistant → Tool (browse): Search brew chemistry + chlorogenic acids; open 3–4 authoritative sources; note recommended temperature ranges.

Assistant (reflect): Two sources converge on 92–94 °C with coarser grind; one warns about over-extraction at low temps.

Assistant → Tool (browse): Add “cold bloom” and “bypass” techniques; collect reported TDS/CE findings.

Assistant (synthesize): Draft checklist (coarser grind, 92–93 °C, 1:15–1:16 ratio, 30 s pre-wet, bypass 10–15%). Provide a quick “why it works,” note trade-offs, and cite sources.This is the core pattern: think → use tool → observe → update plan → decide. It’s inherently long-horizon and directly tied to user satisfaction.

Current Work

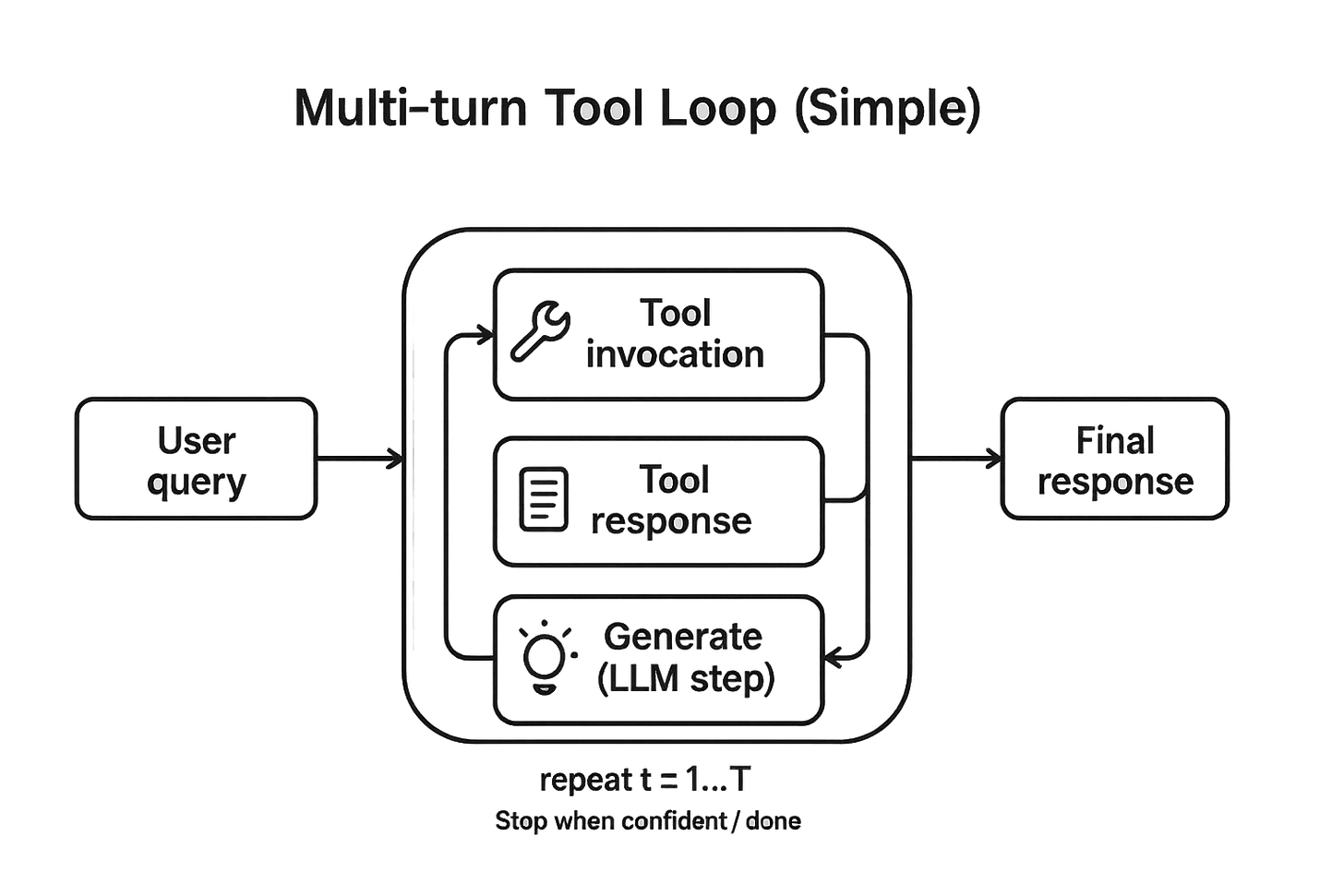

It would be a fun exercise to build a deep research agent from scratch. But for a simple experiment, it’s too many moving parts at once: multiple tools, subjective grading, fuzzy rewards. In this work I choose a much more modest goal, motivated by ByteDance’s ReTool: use one tool (a Python executor) to solve math problems in a multi-turn loop. Math is a great sandbox because it has verifiable outcomes i.e. either the final predicted number matches the ground truth or it does not. This makes reward design straightforward. With a single, well-scoped tool, the engineering surface is small and we can concentrate on the behaviours that matter: when to call the tool, what to compute, and how to use the result to repair a line of reasoning before giving the final answer.

Concretely, I fine-tune a small open model (Qwen2.5-1.5B) to interleave natural-language reasoning with short code executions. I start with a brief SFT pass to teach the interaction ritual (plan → code → read → continue), then apply RL (GRPO) to optimize multi-turn tool use for correctness on a verifiable math benchmark. The rest of the post walks through that recipe - what the episodes look like, how reward and KL control shape behavior, and where the approach shines (and stumbles) when scaled down to a 1.5B model.

Supervised Fine-tuning (SFT)

The first step in teaching a model to use tools to solve a problem is to teach it the style and the ritual of being a tool-using agent. As is the standard practice, I used supervised fine-tuning for this phase. The goal is schema fluency, not cleverness. This mirrors ReTool’s “cold-start” idea of code-augmented traces so RL time isn’t wasted learning I/O.

Data

For SFT, we require a dataset containing prompts and responses that follow the intended workflow: propose a plan, fence a Python call, read the output, continue reasoning, and finalize clearly. Curation of such a dataset is a challenging endeavour in itself, usually involves state-of-the-art frontier models and human labelling. Luckily, ByteDance team released such a data with the ReTool work. We use the JoeYing/ReTool-SFT data.

The samples in the data have the responses interleaving textual reasoning with tool calls (python code) as well as outputs of the code execution. An example looks something like this:

Okay, so I need to find out how many students at Dala High School are not taking any of the three classes: Math, Science, or English. The total number of students is 152. Let me see...

...

<code>\n```python\n\nM = 100\nS = 94\nE = 57\nM_S = 73\nM_E = 24\nS_E = 27\nM_S_E = 22\n\ntotal_in_any = M + S + E - M_S - M_E - S_E + M_S_E\nstudents_neither = 152 - total_in_any\nprint(students_neither)\n```\n</code>

<interpreter>\n3\n</interpreter>

The code returns 3, indicating that 3 students take none of the classes. To verify, ...

<code>\n```python\n\nonly_M_S = 73 - 22\nonly_M_E = 24 - 22\nonly_S_E = 27 - 22\n\nonly_M = 100 - only_M_S - only_M_E - 22\nonly_S = 94 - only_M_S - only_S_E - 22\nonly_E = 57 - only_M_E - only_S_E - 22\n\ntotal_verify = only_M + only_S + only_E + only_M_S + only_M_E + only_S_E + 22\nprint(total_verify)\n```\n</code>

<interpreter>\n149\n</interpreter>

The verification total is 149, so students not taking any classes are 152 - 149 = 3, confirming the initial result.\n\n<answer>\n\\boxed{3}\n</answer>The codes are inside <code></code> tokens and the code outcomes are inside <interpreter></interpreter> tokens, answers being within <answer>\n\\boxed{}\n</answer> is fairly standard.

Qwen models have a tool-using template which expected the conversation to be split into multi-turn messages with system, user, assistant, and tool roles. You provide tool_calls as per a tool schema. The tool role message contains the outcome of running the tool.

messages = [

{”role”: “user”, “content”: “What’s the weather in San Francisco?”},

{

“role”: “assistant”,

“content”: None,

“tool_calls”: [

{

“id”: “call_1”,

“type”: “function”,

“function”: {

“name”: “get_weather”,

“arguments”: ‘{”location”: “San Francisco, CA”}’

}

}

]

},

{

“role”: “tool”,

“tool_call_id”: “call_1”,

“name”: “get_weather”,

“content”: “72°F, sunny”

},

{

“role”: “assistant”,

“content”: “The weather in San Francisco is currently 72°F and sunny.”

}

] Applying the Qwen chat template on the above conversation results in:

<|im_start|>system

# Tools

You may call one or more functions to assist with the user query.

You are provided with function signatures within <tools></tools> XML tags:

<tools>

{”type”: “function”, “function”: {”name”: “get_weather”, “description”: “Get the current weather”, “parameters”: {”type”: “object”, “properties”: {”location”: {”type”: “string”}}}}}

</tools>

For each function call, return a json object with function name and arguments within <tool_call></tool_call> XML tags:

<tool_call>

{”name”: <function-name>, “arguments”: <args-json-object>}

</tool_call><|im_end|>

<|im_start|>user

What’s the weather in San Francisco?<|im_end|>

<|im_start|>assistant

<tool_call>

{”name”: “get_weather”, “arguments”: {”location”: “San Francisco, CA”}}

</tool_call><|im_end|>

<|im_start|>tool

72°F, sunny<|im_end|>

<|im_start|>assistant

The weather in San Francisco is currently 72°F and sunny.<|im_end|>To work with the standard Qwen chat template, I processed the training data into this format. We have 2k samples in this dataset which I spilt into 1800 train and 200 validation examples.

Training

The model is trained for 10 epochs with effective batch size of 16 and the max generation length of 16384. I did full fine-tuning (no LoRA) as our base model is quite small and fits well within the memory constraints of the colab notebooks that I used to conduct this experiment. This results in a model that is good at imitation. It knows how to use the tool but doesn’t necessarily know the optimal strategy for doing so. It might make inefficient calls or get stuck in loops.

After the model generates a tool call (marked by </tool_call>), standard text generation naturally stops because the next predicted token is typically <|im_end|> (the conversation end marker). However, this premature termination prevents the model from:

Receiving the tool’s execution result

Reasoning about the result

Making additional tool calls if needed

Producing the final answer

User: “What is 15 * 23 + 7?”

Model generates:

“Let me calculate this.

<tool_call>

{”name”: “code_interpreter”, “arguments”: {”code”: “15 * 23”}}

</tool_call>”

→ Generation stops here (predicts <|im_end|>)We need to continue the conversation by providing the outcome of the tool back so that the generation continues.

Full Episode

We implement a routine that orchestrates complete multi-turn dialogues between the model and tools. This function serves a dual purpose: it is used both during evaluation to measure model performance and during training via GRPO reinforcement learning to generate training trajectories on which the rewards can be calculated. This operates as follows:

Initialization: The episode begins with the user’s initial query, which forms the first message in the conversation history.

Iterative Generation Loop: The function enters a loop that continues until one of three termination conditions (

</tool_call>,<|im_end|>, or per-turn token limit is reached) is met:Assistant Turn Generation: The model generates a response based on the current conversation history. The generation stops when the model produces terminal conditions:

Token Tracking: All generated tokens are accumulated and corresponding log probabilities are stored. These are later used for computing the policy gradient in GRPO

Tool Execution: The system parses the assistant’s response for tool calls, if no tool call is detected, the episode terminates (final answer reached) but if a tool call is detected:

A Python code interpreter executes the requested computation.

The execution result is formatted as a tool response.

This response is appended to the conversation history as a new user message as

<tool_response>{{response}}</tool_response>.

Budget Enforcement: Each turn is capped at a pre-fixed number of tokens to prevent single-turn dominance and the total episode length is limited to a max tokens. Also enforced a maximum of 3 tool calls (4 assistant turns total) prevents infinite loops.

Episode End: Upon completion, we get the complete conversation history (user queries, assistant responses, tool results).

[ {’role’: ‘user’, ‘content’: ‘What is 123 + 456’}, { ’role’: ‘assistant’, ‘content’: ‘<tool_call>\n{”name”: “code_interpreter”, “arguments”: {”code”: “print(123 + 456)”}}\n</tool_call>’ }, { ’role’: ‘user’, ‘content’: ‘<tool_response>\n579\n</tool_response>’}, { ’role’: ‘assistant’, ‘content’: ‘Therefore, the answer is 579.\n\n\nTherefore, the answer is 579.<|im_end|>’ } ]This also produces the data required for GRPO update e.g. logprobs and the completion token Ids.

Reinforcement Learning

After supervised fine-tuning establishes basic tool-use capabilities, we apply Group Relative Policy Optimization (GRPO) to further improve the model’s performance through reinforcement learning. GRPO is a policy gradient method that normalizes rewards within groups of rollouts from the same prompt, reducing variance and improving training stability compared to standard REINFORCE. I have written about GRPO and REINFORCE in details in earlier posts. SFT teaches the model to imitate a correct reasoning trace. RL teaches the model to find a correct reasoning trace, even from a bad state. It learns to maximize the final reward.

Data

I used the GSM8K dataset as the training data, this is a collection of 7,473 grade school math word problems requiring multi-step reasoning. Each problem consists of a natural language question and a numerical answer. The dataset is well-suited for smaller models like the 1.5B we are using here. It can highlight the power of tool-augmented language models as the problems involve arithmetic operations (addition, multiplication, percentages) that benefit from calculator tools rather than mental math and the solutions often require breaking down problems into intermediate calculations, encouraging multiple tool invocations.

Rewards

We design a composite reward function that encourages not only correct answers but also appropriate tool usage and solution quality. Each episode receives a reward computed as the sum of three components:

Correctness Reward: The primary objective is producing correct answers. We define:

R_correct = { 2.0 if predicted answer equals ground truth 0.0 otherwise }Format Compliance Reward: To encourage well-formed tool calls, we reward syntactically correct tool usage:

R_format = { 0.5 if all tool calls are valid JSON and properly formatted 0.0 if any tool call has syntax errors }This reward component helps the model learn the mechanical aspects of tool invocation, reducing training time wasted on syntactic errors. The relatively small weight (0.5 vs 2.0 for correctness) ensures format compliance is encouraged but not prioritized over solution quality.

Efficiency Reward: To encourage concise solutions, we penalize excessive tool usage and verbose outputs:

R_efficiency = max(0, 1.0 - 0.2 × num_tool_calls - 0.0005 × num_tokens)This reward component balances two competing objectives: we want the model to use tools when helpful (avoiding calculation errors) but not excessively (maintaining efficiency). The coefficients are tuned such that appropriate tool usage (2-3 calls for complex problems) incurs minimal penalty while excessive tool reliance (5+ calls) is discouraged.

Training

We implement Group Relative Policy Optimization (GRPO), which normalizes advantages within groups of rollouts rather than across the entire batch. We use our full episode generation workflow to produce completions:

For each prompt:

Generate 4-8 episodes using run_episode() with temperature=0.7

Compute reward for each episode based on:

- Answer correctness

- Tool use appropriateness

- Solution efficiency

Calculate group-relative advantages

Update policy using policy gradient with collected log probabilitiesThis was trained for 100 steps with 16 effective batch size.

Implementation

Caveat: This might just be my naiveté or inexperience with TRL - let me know if there is a better way to do multi-turn with tool-use in TRL.

While the Transformer Reinforcement Learning (TRL) library provides a GRPOTrainerimplementation, I found it unsuitable for multi-turn tool-use scenarios and implemented a custom trainer. The core issue stems from how tool-augmented language models operate versus what standard RLHF assumes. Traditional RLHF training (and TRL’s implementation) assumes a simple single-turn structure:

Prompt: “What is 15 × 23?”

Completion: “Let me calculate: 15 × 23 = 345” [single generation, done]Policy gradient methods compute loss over the completion tokens, requiring log probabilities for each generated token. In single-turn scenarios, this is straightforward: generate once, collect log probs, compute loss.

However, tool-using models require multi-turn episodes with interleaved generation and tool execution:

Prompt: “What is 15 × 23?”

Generation 1: “Let me calculate. <tool_call>{code: ‘15*23’}</tool_call>” [stop]

Tool response: “<tool_response>345</tool_response>” [environment feedback]

Generation 2: “The answer is 345.” [stop, final answer]The “completion” for policy gradient purposes consists of both Generation 1 and Generation 2 concatenated together i.e. all tokens the model produced. However, these generations are separated by the tool response, which is:

Not generated by the model

Must be present in context for Generation 2

Should not contribute to the policy loss (it’s environment feedback, not model output)

TRL’s GRPOTrainer expects a dataset of pre-computed (prompt, completion) pairs and does not support:

Dynamic Episode Generation: TRL assumes completions are static and pre-generated. However, our training requires:

Generating episodes on-the-fly with the current policy

Executing tools based on model outputs

Continuing generation after receiving tool results

Collecting log probabilities across all assistant turns

This can be somewhat managed by the experimental

rollout_funcin the stable version.Multi-Turn Log Probability Collection: For policy gradients, we need log probabilities for every token the model generates. In single-turn scenarios:

# Single-turn (TRL handles this): output = model.generate(prompt) log_probs = output.scores # Log probs for this generationIn multi-turn scenarios with tool use:

# Multi-turn (TRL doesn’t handle this): Turn 1: output1 = model.generate(prompt) log_probs1 = output1.scores [tool executes] Turn 2: output2 = model.generate(prompt + tool_response) log_probs2 = output2.scoresTRL’s trainer does not provide a mechanism to accumulate log probabilities across multiple generation calls that are separated by external (tool) interactions.

Prompt-Completion Separation: For computing policy loss, we must distinguish between prompt tokens and the completion tokens. In multi-turn episodes, this boundary becomes complex:

Initial prompt: <user query> [prompt] Assistant turn 1: “Let me calculate...” [completion] Tool response: “<tool_response>345</tool_response>” [prompt for turn 2] Assistant turn 2: “The answer is 345.” [completion]For loss computation, we need:

Prompt IDs = Initial user query (tokens before any generation)

Completion IDs = Assistant turn 1 + Assistant turn 2 (concatenated)

But the full conversation for generating turn 2 includes the tool response, which is neither prompt (it wasn’t there initially) nor completion (model didn’t generate it). TRL’s trainer assumes a static prompt boundary and cannot handle this dynamic context evolution.

Our reward function requires access to:

Complete episode structure (all messages, including tool calls and responses)

Tool execution outcomes (did the tool run successfully?)

Final answer extraction from potentially lengthy output

Episode metadata (number of tool calls, token counts)

I tried using TRL’s GRPOTrainer with a custom rollout_func that returns episode data including a field for aggregated messages. However, when the reward functions received the data, the new field was missing - only prompts, completions, completion_ids, and trainer_state were passed through. It seems that TRL’s GRPOTrainer doesn’t forward arbitrary custom fields from your rollout function to the reward functions. It only forwards standard fields.

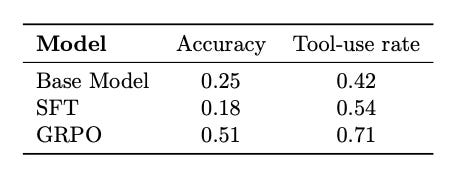

Results

For evaluation, I simply use the GSM8K test data. I didn't do a rigorous evaluation, purely ran the model on the test data samples and checked whether it got the right answer.

For each test question:

Generate single episode using run_episode() with temperature=0.2

Extract final answer from episode

Compare with ground truth

Measure accuracyI compared with the base model and the SFT model.

Note that this is quite different from the results reported in the technical reports. Qwen reports 4-shot average pass@k results, our setting is 0-shot. This is done for no reason other than quicker run. The goal of this work is to explore the use of tools for enhancing reasoning and long-term user satisfaction rather than state-of-the-art.

Models

The models are hosted on Huggingface hub.

Conclusions

In this work, we trained a small LLM to invoke a tool and leverage its output inside the reasoning loop. With a brief SFT cold-start to teach the interaction ritual and a GRPO phase to optimize behavior, the model learned to interleave natural-language planning with compact Python calls and to revise its plan when reality (the executor’s output) disagreed with its first guess. The experiment shows that multi-turn, tool-using behavior is learnable even at 1.5B scale. The policy becomes more deliberate about when to compute, uses shorter, single-purpose snippets, and exhibits useful self-repair. While the absolute ceiling is bounded by model capacity and data, the qualitative shift matters: we’re optimizing for outcomes, not just eloquence.

Big picture: this is a small, concrete step toward long-horizon RL for LLMs. By making execution part of the trajectory and rewarding the end-to-end outcome, we train the behavior we actually want: think → act → observe → revise → answer. That’s the core ingredient for deeper tasks like evidence-grounded research; the math sandbox is where we proved the loop works.

I’ll continue to explore this topic further and share my work in future posts.

If you find this post useful, I would appreciate if you can cite it as:

@misc{verma2025multi-turn-tool-use,

title={Multi-Turn Tool Use with RL

year={2025},

url={\url{https://januverma.substack.com/p/multi-turn-tool-use-with-rl}},

note={Incomplete Distillation}

}

++ Good Post, Also, start here stock market, AI research, Crash Courses, 100+ Most Asked ML System Design Case Studies and LLM System Design

Stock Market

https://open.substack.com/pub/stockmarketanalysis04/p/important-stock-market-post-04-which?r=14q3sp&utm_campaign=post&utm_medium=web&showWelcomeOnShare=false

https://open.substack.com/pub/stockmarketanalysis04/p/important-stock-market-analysis-which?r=14q3sp&utm_campaign=post&utm_medium=web&showWelcomeOnShare=false

https://open.substack.com/pub/stockmarketanalysis04/p/important-stock-market-post-02-understand?r=14q3sp&utm_campaign=post&utm_medium=web&showWelcomeOnShare=false

https://open.substack.com/pub/stockmarketanalysis04/p/important-stock-market-post-03-this?r=14q3sp&utm_campaign=post&utm_medium=web&showWelcomeOnShare=false

Crash Courses

https://open.substack.com/pub/crashcourses/p/crash-course-02-a-complete-crash?r=14q3sp&utm_campaign=post&utm_medium=web&showWelcomeOnShare=false

https://open.substack.com/pub/crashcourses/p/crash-course-01-a-complete-crash?r=14q3sp&utm_campaign=post&utm_medium=web&showWelcomeOnShare=false

AI/ML Research

https://open.substack.com/pub/airesearch04/p/ai-research-2-kimi-k2-thinking-a?r=14q3sp&utm_campaign=post&utm_medium=web&showWelcomeOnShare=false

https://open.substack.com/pub/airesearch04/p/ai-research-1-the-transformer-revolution?r=14q3sp&utm_campaign=post&utm_medium=web&showWelcomeOnShare=false

LLM System Design

https://open.substack.com/pub/naina0405/p/most-important-llm-system-design-b31?r=14q3sp&utm_campaign=post&utm_medium=web&showWelcomeOnShare=false

https://naina0405.substack.com/p/launching-llm-system-design-large?r=14q3sp

https://naina0405.substack.com/p/launching-llm-system-design-2-large?r=14q3sp

[https://open.substack.com/pub/naina0405/p/llm-system-design-3-large-language?r=14q3sp&utm_campaign=post&utm_medium=web&showWelcomeOnShare=false

https://open.substack.com/pub/naina0405/p/important-llm-system-design-4-heart?r=14q3sp&utm_campaign=post&utm_medium=web&showWelcomeOnShare=false

System Design

https://open.substack.com/pub/naina0405/p/system-design-tech-case-study-pulse-862?r=14q3sp&utm_campaign=post&utm_medium=web&showWelcomeOnShare=false

https://open.substack.com/pub/naina0405/p/system-design-tech-case-study-pulse-b3c?r=14q3sp&utm_campaign=post&utm_medium=web

https://open.substack.com/pub/naina0405/p/system-design-tech-case-study-pulse-135?r=14q3sp&utm_campaign=post&utm_medium=web

https://open.substack.com/pub/naina0405/p/system-design-tech-case-study-pulse-007?r=14q3sp&utm_campaign=post&utm_medium=web